Test Automation is significant and growing, yet I have read many forum comments and blog posts about Test Automation not delivering as expected. It’s true that test automation can improve reliability while minimizing variability in the results, speed up the process, increase test coverage, and ultimately provide greater confidence in the quality of the software being tested, but in (too) many cases the benefits never fully materialize.

A significant part of the problem results from misconceptions about software test automation. Many view the automation of tests as a low tech activity that the testers can take care of on top of their test design efforts. Unfortunately, many test tools on the market encourage this vision by making automation “friendly” with nice looking features and support for end users to do their own automation. However, automation is in essence software development—you try to program a computer to do something that you do not want to do yourself anymore. As with any software, automated tests tend to be complex and they can break when something unanticipated happens.

Implementing test automation with the wrong assumptions will produce poor results. Poor results from automation will lead to more misconceptions. Good automation provides optimum productivity to the software testing effort; hence, it leads to higher quality software releases. In order to help make test automation better for everyone, I have attempted to address the most common misconceptions about software test automation.

“Automation is Good!” vs. “Automation is Bad!”

Both of these statements would be misconceptions in my view. Automation should be a mere instrument for the tester, neither good nor bad. For most tests, when the tests are well-designed, it is not even visible whether the execution is automated or not.

Automation is not a silver bullet; it also presents some challenges. Automating a bad test doesn’t improve its quality; it just makes it run faster. I recommend that you define the test methodology first, then choose the right enabling technology to help you implement it. The methodology you choose should provide the following:

- Visibility

- Reusability and Scalability

- Maintainability

After the test methodology and tools are set, the next step is to put the right people in place with the proper skills and training to do the work. Testing is often underestimated as a discipline. In an average project, most attention is given to system requirements and programming. Testing is seen as a supporting activity, and not much effort or money is invested in building or upgrading the testing team. To be effective, testers must have a deep understanding of the system and subject matter under test and should be able to think outside the box in order to find the subtler bugs. Testers also need to be able to work well with others, even under stressful project conditions. I often encounter testers who have not received training in even the most basic testing techniques. This is unfortunate since we’re talking about a small investment that can have a substantial impact on quality and productivity.

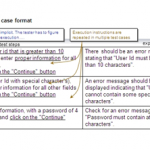

Test automation visibility provides measurability and control over the software development process, which keeps it manageable. Test automation visibility by itself does not provide high test quality; it merely enables us to see how well the test designers are trained. Addressing the training issues will help in addressing the test case quality issues. With good visibility established, you can make effective management decisions about if, when, and how to do training and auditing to address the quality of tests.

The key to automation success is to focus your resources on the test production; that is, to improve the quantity and quality of the tests, not to spend too many resources on automation production.

Reusability and scalability of test automation improve test productivity. However, productivity should be defined by (1) the quantity of tests (driven by reusability and scalability), and (2) the quality of tests (understanding what the tests are actually doing helps improve them qualitatively).

When test automation is reusable and scalable, the issue of quantity is resolved; when test automation is highly maintainable, the cost of ownership is minimized, making the overall testing effort more cost-effective.

Automation is Easy

I’m still waiting to see my first “easy” Automation project. Development is hard, testing is harder, automated testing is the hardest. If you can do automated testing well, you’re in an enviable position, even at the business level. If you don’t do it well, be ready to lose time and money.

Many commercial testing tools are promoted and bought on the premise of “so easy a cave man can do it”. The primary feature of these tools is the capture and replay of manual test cases. Most deliver on the easy-to-create part, but too often the results are inherently brittle and difficult to maintain. When asked how many test cases are actually automated, most organizations report figures in the range of 20-30% or less. This has to do with the amount of work required to automate a test case and to keep the automation up to date with the latest system changes, as well as the sheer number of test cases when each test case needs its own script.

Good test design and development are the critical and most often overlooked aspects of test automation. Few automation management and automation playback tools support test development well. To solve an automation problem, define the test methodology first and then choose the right enabling technology to help you implement the methodology.

Good Automated Testing is Automating Good Manual Tests

Most manual tests are not particularly suitable as a source for maintainable automation. Manual tests often mix global scope with details, which for a manual tester is not a big problem, but for an automated tester it becomes a maintenance liability, where changes in such details in the application under test could uncontrollably impact tests that do not necessarily care about them.

Even if successful, automating manual test designs one by one is expensive. For manual testing, test engineers typically design, write and execute the manual tests, often in a high level of detail. Automation requires additional skills and expertise, typically an automation engineer, a coder and a test engineer, although the coder role may be eliminated or at least greatly reduced by using a keyword framework. A lot of work is required to adapt the manual tests for automation, and especially in large and diverse teams, test engineers don’t all write tests the same way.

Automating manual tests also results in defining test cases around automation rather than test case development. This inhibits creativity and results in bland tests. I prefer test cases to be the outcome of test development, not the input. It’s much better to create automated test products as a whole, where one test case sets up the situation for the next one.

Automation is the Same as Programming

I would hope not. Test automation is not a programming challenge; it is primarily a test design challenge. In a good test design, you should not even notice which tests are executed automatically and which aren’t. An experienced programmer will typically be good at factoring, something that can contribute greatly to high-level test design. This makes having programmers and testers in the same team (and with less rigidly separated roles) very effective in designing automated tests.

To Have More Automation, You Need More Engineers

This is not even true for system development. It can best be likened to “The Mythical Man-Month”: it takes one woman nine months to birth a child, but nine women cannot birth a child in one month. For test automation, having more engineers rarely has a lasting positive effect. Initially a lot of tests can be automated, but when the system under test changes, as is frequently the case in rapid development environments, a lot of test maintenance is required. The result is that more time is needed to maintain tests than to create new ones, and testing starts to slow down. The typical solution is more engineers, but there are limits to adding resources. The real solution is more up-front planning and thinking about what you do, particularly modularization of test cases. Good modularization allows you to focus on a specific, well-defined scope, and reach a lot more depth in the process.

Automation is Best for Requirements Based Testing

Requirements are almost the enemy of good tests. They can lead to lazy test development when tests are created only for the requirements, on a one-by-one basis.

Automated Tests are Dumb by Definition

A common impression is that automated tests are by definition dumb, compared for example to exploratory testing. They often are, particularly when they are based one-to-one on requirements or specifications, but they don’t have to be. It is the responsibility of the testers (the team) to ensure tests are not dumb. Automation is not an excuse. Testers have a lot of experience that can be put to good use, and face it, unexpected situations are a common source of problems in systems, and you won’t find those with tests based on requirements. Automation is a good way to test a lot more unexpected parameters, and good modularization (test organization) allows you to focus on a specific, well-defined scope, and reach a lot more depth in the process.
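
To illustrate how automation can cover many unexpected parameters cheaply, here is a small sketch. The function under test, `parse_amount`, and the input list are assumptions made up for this example; the point is only that a single automated check can be driven through as many odd inputs as the tester can think of.

```python
def parse_amount(text):
    """Hypothetical function under test: parse a non-negative money amount."""
    value = float(text.strip())  # raises ValueError for non-numeric text
    # Reject negatives, NaN (the only value not equal to itself) and infinity.
    if value < 0 or value != value or value == float("inf"):
        raise ValueError(f"invalid amount: {text!r}")
    return round(value, 2)


# Inputs a requirements-based test would rarely list (illustrative only).
UNEXPECTED_INPUTS = ["", "   ", "-1", "abc", "1e999", "nan", "12,50"]


def rejected_inputs(inputs):
    """Return the inputs that the parser rejects with ValueError."""
    rejected = []
    for text in inputs:
        try:
            parse_amount(text)
        except ValueError:
            rejected.append(text)
    return rejected
```

A test would then assert that `rejected_inputs(UNEXPECTED_INPUTS)` contains every entry in the list. Adding another unexpected case costs one more list entry rather than one more script.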

Automation is for Regression Testing

In my opinion, this is a good example of the “carriage without a horse” view. In some cases it may be ok to make a selection of already developed tests to use for regression, but I don’t see “regression” as a good angle for effective test design. Certain test design elements, like good breakdown in modules and good flow in modules, help automation, but automation is not a test design criterion.

If There are Automation Problems, The Tests Should be Debugged

“Thou should never debug tests!” If observed results are not the expected results, it is not an automation problem. Either the tester or the developer was off-track with the system requirements. If the application under test isn’t working, go back and run lower-level tests first. If your platform uses keyword actions, and they aren’t working, it’s likely they weren’t tested and debugged prior to being used in tests.

You Need Criteria, like ROI, to Decide Which Tests to Automate

This is one of the most commonly found statements on test automation. However, in a good testing project (my definition of ‘good’), I like to regard automation as a supporting activity. I prefer to see ROI metrics focused on tests (and test development) rather than on their automation. It helps to think of the ROI equation as having the benefits on one side, and the costs on the other. For the benefits, consider the productivity, both in quantity and quality of tests. For the cost side of the equation, think about the reusability, scalability and maintainability of the tests.

Keywords are A Method

Keywords are nothing more than a format to write tests in. They can be a good basis for a method for test development and test automation, but they’re not much of a method in themselves. Keyword driven testing is a testing technique that separates much of the programming work of test automation from the actual test design. Nowadays I consider test modules (or a similar concept) as a more essential element in effective test development and successive automation.
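
The separation between test design and automation described above can be sketched in a few lines. This is an illustration under assumptions, not the implementation of any particular keyword tool: the action names, the `ACTIONS` table and the `run` function are all hypothetical. The test is plain data that a tester can write; the programming is confined to one small function per action.

```python
# Test design: rows of (action keyword, arguments), readable without programming.
TEST_MODULE = [
    ("open account", "alice", 100),
    ("deposit", "alice", 50),
    ("check balance", "alice", 150),
]


# Automation: one function per action keyword (all names are illustrative).
def open_account(state, name, amount):
    state[name] = amount


def deposit(state, name, amount):
    state[name] += amount


def check_balance(state, name, expected):
    assert state[name] == expected, f"{name}: expected {expected}, got {state[name]}"


ACTIONS = {
    "open account": open_account,
    "deposit": deposit,
    "check balance": check_balance,
}


def run(test_lines):
    """Interpret each test line and record whether its action passed."""
    state = {}
    results = []
    for keyword, *args in test_lines:
        try:
            ACTIONS[keyword](state, *args)
            results.append((keyword, "passed"))
        except AssertionError as err:
            results.append((keyword, f"failed: {err}"))
    return results
```

Calling `run(TEST_MODULE)` executes the three lines in order against a simple in-memory state. Note that the first two lines set up the situation the third one checks, which is the kind of flow a test module is meant to organize.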

Keywords Will Solve Your Test Automation Problems

Keywords are not a magic wand. Some of the worst automation projects I have seen were in fact done with keywords. Keywords provide a convenient interface for non-technical users, and encourage abstraction from unneeded details; however, in themselves they don’t help much if you don’t pay attention to how you organize your tests. Keywords in fact can work as an amplifier: good practices get a better pay-off; bad practices have more dire consequences than they may have without keywords.

When Using Actions, We Should Predefine Them

Some companies form a group that defines actions, while others let engineers define them. However, it is about the tests, not about the keywords (or the automation). Actions, with their keywords and arguments, should be a by-product of the tests. But it is important to standardize the naming conventions for actions. A couple of guidelines:

- Always start with a verb followed by a subject, like “check balance.”

- Standardize the verbs, so always use “check” and not “verify.”
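
Guidelines like these are easy to check mechanically. The following is a rough sketch only; the approved verb list and the function name `action_name_problems` are assumptions for this example, and a real team would maintain its own list.

```python
# Assumed team-approved verbs; "verify" is deliberately absent in favor of "check".
APPROVED_VERBS = {"check", "enter", "click", "open", "select"}


def action_name_problems(name):
    """Return a list of naming-convention problems for one action name."""
    words = name.split()
    verb = words[0] if words else ""
    problems = []
    if len(words) < 2:
        problems.append("expected a verb followed by a subject")
    if verb not in APPROVED_VERBS:
        problems.append(f"verb is not in the approved list: {verb!r}")
    return problems
```

With this list, `action_name_problems("check balance")` reports nothing, while `"verify balance"` is flagged for its verb.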

Testing and Automation Should be Part of the System Development Life Cycle

In general, I feel there should be three product cycles:

- The system under test

- Tests

- Automation (of keywords)

It is common to have testing and automation activities positioned as part of a system development life cycle, regardless of whether that is a waterfall or an agile approach. In an agile project, one team will typically be responsible for all three of these product cycles, and their relations.

System development follows any SDLC model, traditional or agile. Test development includes test design, test execution, test result follow-up, and test maintenance. Automation focuses solely on the action keywords: interpreting actions, matching user or non-user interfaces, researching technology challenges, and so on. Creating software, and making it work, requires specific skills and interests. It takes experience and patience to find the cause of a problem. This is even more the case with test automation than it is with other software.

Conclusion

We can differ on what is true and false regarding assumptions, but we can all avoid making the wrong ones by a tried and true process: Think before you do, and pay attention to test design and organization, not just to the technology.
