When Netflix decided to enter the Android ecosystem, we faced a daunting set of challenges:

1. We wanted to release rapidly (every 6-8 weeks).

2. There were hundreds of Android devices of different shapes, versions, capacities, and specifications that needed to play back audio and video.

3. We wanted to keep the team small and happy.

Of course, the seasoned tester in you has to admit that these are the sort of problems you like to wake up to every day and solve. Doing it with a group of fellow software engineers who are passionate about quality is what made overcoming those challenges even more fun.

Release rapidly

You probably guessed that automation had to play a role in this solution. However, automating scenarios on a phone or tablet is complicated when the core functionality of your application is to play back video natively, but the user interface is HTML5 running in the application’s web view.

Verifying an app that uses an embedded web view to serve as its presentation platform was challenging in part due to the dearth of tools available. We considered Selenium, Android Native Driver, and the Android Instrumentation Framework. Unfortunately, we could not use Selenium or the Android Native Driver, because the bulk of our user interactions occur on the HTML5 front end. As a result, we decided to build a slightly modified solution.

Our modified test framework heavily leverages a piece of our product code which bridges JavaScript and native code through a proxy interface. Though we were able to drive some behavior by sending commands through the bridge, we needed an automation hook in order to report state back to the automation framework. Since the document title is never shown to the user in our HTML UI, we decided to use the title element as our hook. We rely on the onReceivedTitle notification as a way to communicate back to our Java code when some JavaScript is executed in the HTML5 UI. Through this approach, we were able to execute a variety of tasks by injecting JavaScript into the web view, performing the appropriate DOM inspection task, and then reporting the result through the title property.
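The title-based hook described above can be sketched in plain Java. This is only an illustration under stated assumptions, not our actual framework code: the class name `TitleHook`, the `AUTOMATION|id|value` message layout, and the `.movie` selector are all hypothetical. On a device, the generated script would be passed to `WebView.loadUrl(...)` and the resulting title would arrive in `WebChromeClient.onReceivedTitle(view, title)`.

```java
import java.util.Optional;

/**
 * Sketch of a title-based reporting channel between page JavaScript and Java.
 * All names here (TitleHook, PREFIX, the message layout) are hypothetical.
 */
public class TitleHook {
    // Marker so the Java side can tell automation messages from real titles.
    static final String PREFIX = "AUTOMATION";
    static final String SEP = "|";

    /** A decoded reply: which request it answers and what the page reported. */
    public static final class Reply {
        public final int requestId;
        public final String value;

        Reply(int requestId, String value) {
            this.requestId = requestId;
            this.value = value;
        }
    }

    /**
     * Builds a javascript: URL that evaluates a DOM expression and writes the
     * result into document.title, which triggers a title-change notification.
     */
    public static String buildScript(int requestId, String jsExpression) {
        return "javascript:(function(){"
                + "var r;"
                + "try{r=String(" + jsExpression + ");}catch(e){r='ERROR:'+e;}"
                + "document.title='" + PREFIX + SEP + requestId + SEP + "'+r;"
                + "})()";
    }

    /** Parses a title; returns empty if it is not an automation message. */
    public static Optional<Reply> parseTitle(String title) {
        String head = PREFIX + SEP;
        if (title == null || !title.startsWith(head)) {
            return Optional.empty();
        }
        String rest = title.substring(head.length());
        int cut = rest.indexOf(SEP);
        if (cut < 0) {
            return Optional.empty();
        }
        try {
            int id = Integer.parseInt(rest.substring(0, cut));
            return Optional.of(new Reply(id, rest.substring(cut + 1)));
        } catch (NumberFormatException e) {
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        // Script that would count hypothetical ".movie" elements in the page.
        System.out.println(buildScript(7, "document.querySelectorAll('.movie').length"));
        // Title as it might come back through onReceivedTitle.
        parseTitle("AUTOMATION|7|42")
                .ifPresent(r -> System.out.println(r.requestId + " -> " + r.value));
    }
}
```

Tagging each message with a request id lets the test code match a reply to the script that produced it, and the prefix keeps ordinary page titles from being mistaken for results.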

With this solution in place, we are able to automate all our key scenarios such as login, browsing the movie catalog, searching, and controlling movie playback.

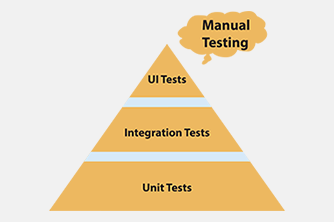

While we automate the testing of playback, the subjective analysis of quality is still left to the tester. Using automation, we can catch buffering and other streaming issues by adding testability to our software, but at the end of the day we need a tester to verify things such as seamless resolution switching or HD quality, which are hard and cost-prohibitive to verify with automation today.

We have a continuous integration build system that runs our automated smoke tests on a bank of devices for each submit. With the framework in place, we are able to quickly ascertain build stability across the vast array of makes and models that are part of the Android ecosystem. This quick and inexpensive feedback loop enables a very quick release cycle, as the testing overhead in each release is low given the stakes.

Device diversity

To put device diversity in context, we see around 1000 different devices streaming Netflix on Android every day. We had to figure out how to categorize these devices into buckets so that we could be reasonably sure that we are releasing something that will work properly on all of them. The devices we choose for our continuous integration system are based on the following criteria:

1. We have at least one device for each playback pipeline architecture we support (the app uses several approaches for video playback on Android, such as hardware decoder, software decoder, OMX-AL, and iOMX).

2. We choose devices with high-end and low-end processors, as well as devices with different memory capabilities.

3. We have representative devices for each major operating system version, by make, as well as devices running custom ROMs (most notably CM7 and CM9).

4. We choose devices that are most heavily used by Netflix subscribers.

With this information, we took stock of all the devices we have in house and classified them based on their specs. We figured out the optimal combination of devices to give us maximum coverage. We were able to reduce our daily smoke automation devices to around 10 phones and 4 tablets and keep the rest for the longer release-wide test cycles.
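One simple way to approximate this kind of selection is a greedy set-cover pass: repeatedly pick the device that covers the most traits still uncovered. The sketch below is illustrative only and is not necessarily how we chose our lab devices. The class `DevicePicker`, the device names, and the trait labels are all made up, and the greedy approach is a heuristic that does not guarantee the smallest possible set.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Hypothetical helper that picks a small device set covering all traits. */
public class DevicePicker {

    /** Greedily picks devices until every required trait is covered. */
    public static List<String> pick(Map<String, Set<String>> traitsByDevice,
                                    Set<String> required) {
        Set<String> uncovered = new HashSet<>(required);
        List<String> chosen = new ArrayList<>();
        while (!uncovered.isEmpty()) {
            String best = null;
            int bestGain = 0;
            for (Map.Entry<String, Set<String>> e : traitsByDevice.entrySet()) {
                // Count how many still-uncovered traits this device adds.
                Set<String> gain = new HashSet<>(e.getValue());
                gain.retainAll(uncovered);
                if (gain.size() > bestGain) {
                    bestGain = gain.size();
                    best = e.getKey();
                }
            }
            if (best == null) {
                break; // no device in the lab covers the remaining traits
            }
            chosen.add(best);
            uncovered.removeAll(traitsByDevice.get(best));
        }
        return chosen;
    }

    public static void main(String[] args) {
        // Made-up inventory; LinkedHashMap keeps tie-breaking deterministic.
        Map<String, Set<String>> lab = new LinkedHashMap<>();
        lab.put("phone-A", new HashSet<>(Arrays.asList("hw-decoder", "high-cpu")));
        lab.put("phone-B", new HashSet<>(Arrays.asList("sw-decoder", "low-cpu", "low-mem")));
        lab.put("phone-C", new HashSet<>(Arrays.asList("hw-decoder", "low-cpu")));
        Set<String> required = new HashSet<>(Arrays.asList(
                "hw-decoder", "sw-decoder", "high-cpu", "low-cpu", "low-mem"));
        System.out.println(pick(lab, required)); // prints [phone-B, phone-A]
    }
}
```

In this made-up inventory, phone-C adds nothing once phone-B and phone-A are chosen, so it would be a candidate for the longer release-wide cycles rather than the daily smoke run.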

This list gets updated periodically to adjust to changing market conditions. Also note that this is only the phone list; we have a separate list for tablets. We have several other phones that we test using automation and a smaller set of high-priority tests, while the list above goes through the comprehensive suite of manual and automated testing.

To put it another way, when it comes to watching Netflix, any device other than those ten can be classified with one of the high-priority devices based on its configuration. This in turn helps us quickly identify the class of problems associated with a given device.
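As a rough illustration of this kind of classification, a device's configuration can be collapsed into a coarse bucket key and looked up against the representative lab devices. Everything in this sketch is an assumption made for the example: the class `DeviceBuckets`, the `Spec` fields, the CPU and memory thresholds, and the device names are hypothetical and do not reflect our real bucketing rules.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

/** Hypothetical mapping from device configuration to a representative device. */
public class DeviceBuckets {

    /** Minimal device description; fields loosely follow the criteria above. */
    public static final class Spec {
        final String pipeline; // e.g. "hw-decoder" or "sw-decoder"
        final int cpuMhz;
        final int ramMb;

        public Spec(String pipeline, int cpuMhz, int ramMb) {
            this.pipeline = pipeline;
            this.cpuMhz = cpuMhz;
            this.ramMb = ramMb;
        }
    }

    /** Collapses a spec into a coarse bucket key. Thresholds are made up. */
    public static String bucketOf(Spec s) {
        String cpu = s.cpuMhz >= 1000 ? "high-cpu" : "low-cpu";
        String mem = s.ramMb >= 512 ? "high-mem" : "low-mem";
        return s.pipeline + "/" + cpu + "/" + mem;
    }

    private final Map<String, String> representativeByBucket = new HashMap<>();

    /** Registers a lab device as the representative of its bucket. */
    public void addRepresentative(String deviceName, Spec spec) {
        representativeByBucket.put(bucketOf(spec), deviceName);
    }

    /** Finds the lab device that stands in for the given configuration. */
    public Optional<String> representativeFor(Spec spec) {
        return Optional.ofNullable(representativeByBucket.get(bucketOf(spec)));
    }

    public static void main(String[] args) {
        DeviceBuckets buckets = new DeviceBuckets();
        buckets.addRepresentative("lab-phone-1", new Spec("hw-decoder", 1200, 1024));
        buckets.addRepresentative("lab-phone-2", new Spec("sw-decoder", 600, 256));

        // A device seen in the field that is not part of the lab.
        Spec fieldDevice = new Spec("sw-decoder", 800, 384);
        System.out.println(bucketOf(fieldDevice));
        System.out.println(buckets.representativeFor(fieldDevice).orElse("no representative"));
    }
}
```

When a problem is reported on an unfamiliar device, a lookup like this points to the lab device most likely to reproduce it.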

Small happy team

We keep our team lean by focusing our full-time employees on building solutions that scale, and automation is a key part of this effort. When we do an international launch, we rely on crowd-sourced testing solutions like uTest to quickly verify network and latency performance. This provides us real-world insurance that all of our backend systems are working as expected. These approaches give our team time to watch their favorite movies to ensure that we have the best mobile streaming video solution in the industry.