I’ve been reviewing a lot of test plans recently, and along the way I’ve compiled this list of things I look for in a well-written test plan document. Here’s a brain dump of what I check for, in no particular order, of course, and by no means a complete list.

That said, if you see a major omission, please chime in and let me know what you expect from or include in your test plans. These requirements may or may not work for you depending on your development methodology, but they’re what I look for. I’m sure I’ll think of several more points as soon as I publish this post!

Test Strategy

I always like to see the testing strategy called out early in the test plan. It’s best to vet your testing strategy with your team as early as possible in the product/feature cycle. This sets the stage for future test planning discussions and, most importantly, gives your teammates an opportunity to call out flaws in the strategy before you’ve done a lot of tactical work such as creating individual test cases and developing test automation. Some key points I look for in this section are:

- An Understanding of the Feature Architecture – For the bits you are testing through “glass box” approaches, it’s very helpful for the test strategy section to reflect a basic understanding of the feature architecture. I don’t expect to see a full copy of the feature architecture spec, just the “interesting” points and how the tester is using that knowledge to build an efficient testing strategy. For example, if the UI is built using MVC (Model-View-Controller), much of the testing can be done “under the hood” against the controller rather than driving every test through UI automation.

- Customer-Focused Testing Plan – Are you going to do any “real-world” testing on your feature? Some examples include “dog-fooding” (either using the product yourself or deploying frequent drops to real customers to get feedback), testing with “real” data, and install/uninstall testing on “dirty” machines (as opposed to cleanly installed VMs, for example).

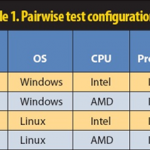

- Test Case Generation Methodology – List the approach you’re taking to generate test cases. Are you using the typical “sit around a table and brainstorm” approach? Model-based testing? Pairwise testing? Automatic unit test stubbing tools?

- Identification of Who Will Test What – For example, developers will write and run unit tests before check-ins, while testers will focus on system-level, integration, and end-user scenario testing. This serves multiple purposes: it reduces redundancy and drives accountability for quality across the feature area.
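To make the MVC point from the architecture bullet concrete, here is a minimal sketch in Python using the standard `unittest` module. The `LoginController`, `FakeView`, and `FakeAuthService` classes are hypothetical stand-ins, not from any real product. The controller holds the logic, and the fake view simply records what the UI would have been asked to display, so the tests exercise the controller’s behavior without launching or automating a UI.

```python
import unittest


class LoginController:
    """Hypothetical controller: validates input and tells the view what to show."""

    def __init__(self, view, auth_service):
        self.view = view
        self.auth_service = auth_service

    def submit(self, username, password):
        if not username or not password:
            self.view.show_error("Username and password are required.")
            return False
        if self.auth_service.check(username, password):
            self.view.show_welcome(username)
            return True
        self.view.show_error("Invalid credentials.")
        return False


class FakeView:
    """Records what the controller asked the UI to display; no real UI needed."""

    def __init__(self):
        self.messages = []

    def show_error(self, text):
        self.messages.append(("error", text))

    def show_welcome(self, username):
        self.messages.append(("welcome", username))


class FakeAuthService:
    """Hypothetical auth back end backed by a dict of username -> password."""

    def __init__(self, valid):
        self.valid = valid

    def check(self, username, password):
        return self.valid.get(username) == password


class LoginControllerTests(unittest.TestCase):
    def setUp(self):
        self.view = FakeView()
        self.controller = LoginController(self.view, FakeAuthService({"ana": "s3cret"}))

    def test_missing_password_shows_error(self):
        self.assertFalse(self.controller.submit("ana", ""))
        self.assertEqual(self.view.messages, [("error", "Username and password are required.")])

    def test_valid_login_shows_welcome(self):
        self.assertTrue(self.controller.submit("ana", "s3cret"))
        self.assertEqual(self.view.messages, [("welcome", "ana")])

    def test_wrong_password_shows_error(self):
        self.assertFalse(self.controller.submit("ana", "nope"))
        self.assertEqual(self.view.messages, [("error", "Invalid credentials.")])
```

Saved as, say, `test_login_controller.py`, the suite runs with `python -m unittest test_login_controller`. Because the view is faked, these checks run in milliseconds, leaving the slower UI automation for a thinner layer of end-to-end scenarios.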
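For readers unfamiliar with pairwise testing (one of the generation methods mentioned above): the idea is to pick test configurations so that every combination of values for any *two* parameters appears in at least one test, which is usually far smaller than the full cross product. Below is a minimal greedy sketch in Python. The function name `pairwise_cases` and the example parameters are hypothetical. Dedicated pairwise tools use smarter search; this version scores every full combination on each pass, so it is only practical for small models.

```python
from itertools import combinations, product


def pairwise_cases(parameters):
    """Greedy all-pairs generator (a sketch, not an optimized tool).

    parameters: dict mapping parameter name -> list of possible values.
    Returns a list of dicts in which every value pair from any two
    parameters appears together in at least one case.
    """
    names = list(parameters)
    # Every (param_a, value_a, param_b, value_b) combination still to be covered.
    uncovered = {
        (a, va, b, vb)
        for a, b in combinations(names, 2)
        for va, vb in product(parameters[a], parameters[b])
    }

    cases = []
    while uncovered:
        best_case, best_gain = None, 0
        # Score every full combination by how many uncovered pairs it would cover.
        # Exhaustive, so only practical for small parameter models.
        for values in product(*(parameters[n] for n in names)):
            case = dict(zip(names, values))
            gain = sum(
                (a, case[a], b, case[b]) in uncovered
                for a, b in combinations(names, 2)
            )
            if gain > best_gain:
                best_case, best_gain = case, gain
        cases.append(best_case)
        for a, b in combinations(names, 2):
            uncovered.discard((a, best_case[a], b, best_case[b]))
    return cases


# Hypothetical configuration parameters for a feature under test.
params = {
    "os": ["Windows", "Linux"],
    "browser": ["Edge", "Firefox", "Chrome"],
    "locale": ["en-US", "de-DE"],
}

for case in pairwise_cases(params):
    print(case)
```

With these three parameters (2 × 3 × 2 = 12 full combinations), the greedy pass produces 6 cases. That is the minimum possible here, since the 2 operating systems × 3 browsers pairs alone require six tests.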

Testing Risks and Mitigation Plans

What known risks are you aware of? Some examples might be building on top of a new platform/framework that hasn’t been fully tested yet, tight schedules, or relying on components delivered by external sources or other teams. Once you’ve called out the major risks, list what you are doing to reduce the chance that each one will impact your project. For example, do you have escalation paths identified in case you find bugs in those external components?

Testing Schedule

Ideally, this would be merged into a common team schedule so you can see dates from each discipline alongside each other. Dates and testing events listed should include dependencies (e.g., “Test Plan Initial Draft Complete” probably depends on the “Feature Architecture Spec Complete” and “End User Scenario Spec Complete” dates being hit). SharePoint is a great place to keep a team calendar for feature development, and the test plan can simply link to the current schedule.
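If the schedule can be exported as data, dependency checks like the one described above are easy to automate. Here is a minimal Python sketch using the standard library’s `graphlib` (Python 3.9+). The milestone names come from the example above, but the dates and the `schedule_conflicts` helper are hypothetical.

```python
from datetime import date
from graphlib import TopologicalSorter


def schedule_conflicts(dates, dependencies):
    """Return (milestone, prerequisite) pairs where a milestone is scheduled
    no later than a milestone it depends on.

    dates: milestone name -> planned date
    dependencies: milestone name -> set of prerequisite milestone names
    """
    conflicts = []
    # static_order() yields prerequisites before dependents and raises
    # graphlib.CycleError if the dependencies are circular.
    for milestone in TopologicalSorter(dependencies).static_order():
        for prereq in sorted(dependencies.get(milestone, ())):
            if dates[milestone] <= dates[prereq]:
                conflicts.append((milestone, prereq))
    return conflicts


# Hypothetical dates for the milestones named in the text.
dates = {
    "Feature Architecture Spec Complete": date(2024, 3, 1),
    "End User Scenario Spec Complete": date(2024, 3, 15),
    "Test Plan Initial Draft Complete": date(2024, 3, 10),
}
dependencies = {
    "Test Plan Initial Draft Complete": {
        "Feature Architecture Spec Complete",
        "End User Scenario Spec Complete",
    },
}

print(schedule_conflicts(dates, dependencies))
# [('Test Plan Initial Draft Complete', 'End User Scenario Spec Complete')]
```

Here the test plan draft is dated before the scenario spec it depends on, so that pair is flagged; moving the draft past March 15 clears it.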

Test Case Details

Each test case should be written so that someone who is only loosely familiar with the feature (and armed with the feature specifications) can execute it. This helps for several reasons: it cushions the impact of churn among testers on the project, it facilitates hand-offs to test vendors or servicing teams (if you’re lucky enough to have one), and, most importantly, it clarifies what is and isn’t being tested by reducing ambiguity in the test plan. For each test case, I look for:

- A clear description of the test case and steps

- A description of expected output/behavior

- Things to verify to prove the test case worked (or, for negative testing, that it failed in the expected way)

- “Clean up” steps, for cases where the test changes something that might invalidate the entry point for other test cases

- Mapping back to an end-user scenario (this can be accomplished by grouping your tests by scenario, for example)

- Priority of the test case (from the end-user’s perspective usually, though there are an infinite number of ways to prioritize)
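If you keep test cases in code, or in a tool that can import structured data, the fields listed above map naturally onto a simple record type. Here is a minimal Python sketch (3.9+). The `ManualTestCase` class, its field names, and the “1 is highest” priority scale are hypothetical, not a standard format. Grouping by scenario and sorting by priority covers the last two bullets.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ManualTestCase:
    title: str
    steps: list[str]   # ordered steps someone new to the feature can follow
    expected: str      # expected output/behavior
    verify: list[str]  # checks proving the case passed (or failed as expected)
    scenario: str      # end-user scenario this case maps back to
    priority: int      # hypothetical scale: 1 = most important to the end user
    cleanup: list[str] = field(default_factory=list)  # restore state for later cases


def group_by_scenario(cases):
    """Group cases by end-user scenario, highest priority (lowest number) first."""
    groups = defaultdict(list)
    for case in sorted(cases, key=lambda c: c.priority):
        groups[case.scenario].append(case)
    return dict(groups)


# Hypothetical test cases for a "save" feature.
cases = [
    ManualTestCase(
        title="Save with an empty file name",
        steps=["Open the Save dialog", "Clear the file name", "Click Save"],
        expected="An error message asks for a file name",
        verify=["No file is written to disk"],
        scenario="Save a document",
        priority=2,
    ),
    ManualTestCase(
        title="Save a new document",
        steps=["Create a document", "Click Save", "Enter a file name", "Click OK"],
        expected="The document is saved and the title bar shows the file name",
        verify=["The file exists on disk", "Reopening it shows the same content"],
        scenario="Save a document",
        priority=1,
        cleanup=["Delete the saved file"],
    ),
]

for scenario, group in group_by_scenario(cases).items():
    print(scenario, [c.title for c in group])
```

The optional `cleanup` list defaults to empty, so only cases that actually change shared state need to spell out their clean-up steps.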

And last but not least…

Make sure you use the right cover sheet! Seriously, if your team has a test plan template, by all means start with that! Chances are it’s much more than a “TPS report.” Templates give you a framework to help you think through your test planning process. You probably don’t need to include every section in your final plan; just include the sections that are relevant to the feature/product you are testing.

There you go. I hope this helps you think through your own test planning process.